December 12, 2022

No, Google Did Not Hike the Price of a .dev Domain from $12 to $850

It was perfect outrage fodder, quickly gaining hundreds of upvotes on Hacker News:

As you know, domain extensions like .dev and .app are owned by Google. Last year, I bought the http://forum.dev domain for one of our projects. When I tried to renew it this year, I was faced with a renewal price of $850 instead of the normal price of $12.

It's true that most .dev domains are just $12/year. But this person never paid $12 for forum.dev. According to his own screenshots, he paid 4,360 Turkish Lira for the initial registration on December 6, 2021, which was $317 at the time. So yes, the price did go up, but not nearly as much as the above comment implied.

According to a Google worker, this person should have paid the same, higher price in 2021, since forum.dev is a "premium" domain, but got an extremely favorable exchange rate so he ended up paying less. That's unsurprising for a currency which is experiencing rampant inflation.

Nevertheless, domain pricing has become quite confusing in recent years, and when reading the ensuing Hacker News discussion, I learned that a lot of people have some major misconceptions about how domains work. Multiple people said untrue or nonsensical things along the lines of "Google has a monopoly on the .dev domain. GoDaddy doesn't have a monopoly on .com, .biz, .net, etc." So I decided to write this blog post to explain some basic concepts and demystify domain pricing.

Registries vs Registrars

If you want to have an informed opinion about domains, you have to understand the difference between registries and registrars.

Every top-level domain (.com, .biz, .dev, etc.) is controlled by exactly one registry, which is responsible for administering the TLD and operating the TLD's DNS servers. The registry effectively owns the TLD. Some registries are:

| TLD | Registry |
|---|---|
| .com | Verisign |
| .biz | GoDaddy |
| .dev | Google |

Registries do not sell domains directly to the public. Instead, registrars broker the transaction between a domain registrant and the appropriate registry. Registrars include Gandi, GoDaddy, Google, Namecheap, and name.com. Companies can be both registries and registrars: e.g. GoDaddy and Google are registrars for many TLDs, but registries for only some TLDs. (Technically, a company's registry and registrar must be separate legal entities; e.g. Google's registry is the wholly-owned subsidiary Charleston Road Registry.)

When you buy or renew a domain, the bulk of your registration fee goes to the registry, with the registrar adding some markup. Additionally, 18 cents goes to ICANN (Internet Corporation for Assigned Names and Numbers), which oversees the entire domain name system.

For example, Google's current .com price of $12 is broken down as follows:

| Amount | Component |
|---|---|
| $0.18 | ICANN fee |
| $8.97 | Verisign's registry fee |
| $2.85 | Google's registrar markup |
| $12.00 | Total |

Registrars typically carry domains from many different TLDs, and TLDs are typically available through multiple registrars. If you don't like your registrar, you can transfer your domain to a different one. This keeps registrar markup low. However, you'll always be stuck with the same registry. If you don't like their pricing, your only recourse is to get a whole new domain with a different TLD, which is not meaningful competition.

At the registry level, it's not true that there is no monopoly on .com - Verisign has just as much of a monopoly on .com as Google has on .dev.

At the registrar level, Google holds no monopoly over .dev - you can buy .dev domains through registrars besides Google, so you can take your business elsewhere if you don't like the Google registrar. Of course, the bulk of your fee will still go to Google, since they're the registry.

ICANN Price Controls

So if .com is just as monopoly-controlled as .dev, why are all .com domains the same low price? Why are there no "premium" domains like with .dev?

It's not because Verisign is scared by the competition, since there is none. It's because Verisign's contract with ICANN is different from Google's contract with ICANN.

The .com registry agreement between Verisign and ICANN capped the price of .com domains at $7.85 in 2020, with at most a 7% increase allowed every year. Verisign has since imposed two 7% price hikes, putting the current price at $8.97.

In contrast, .dev is governed by ICANN's standard registry agreement, which has no price caps. It does, however, forbid "discriminatory" renewal pricing:

In addition, Registry Operator must have uniform pricing for renewals of domain name registrations ("Renewal Pricing"). For the purposes of determining Renewal Pricing, the price for each domain registration renewal must be identical to the price of all other domain name registration renewals in place at the time of such renewal, and such price must take into account universal application of any refunds, rebates, discounts, product tying or other programs in place at the time of renewal. The foregoing requirements of this Section 2.10(c) shall not apply for (i) purposes of determining Renewal Pricing if the registrar has provided Registry Operator with documentation that demonstrates that the applicable registrant expressly agreed in its registration agreement with registrar to higher Renewal Pricing at the time of the initial registration of the domain name following clear and conspicuous disclosure of such Renewal Pricing to such registrant, and (ii) discounted Renewal Pricing pursuant to a Qualified Marketing Program (as defined below). The parties acknowledge that the purpose of this Section 2.10(c) is to prohibit abusive and/or discriminatory Renewal Pricing practices imposed by Registry Operator without the written consent of the applicable registrant at the time of the initial registration of the domain and this Section 2.10(c) will be interpreted broadly to prohibit such practices.

This means that Google is only allowed to increase a domain's renewal price if it also increases the renewal price of all other domains. If Google wants to charge more to renew a "premium" domain, the higher price must be clearly and conspicuously disclosed to the registrant at time of initial registration. This prevents Google from holding domains hostage: they can't set a low price and later increase it after your domain becomes popular.

(By displaying prices in lira instead of USD for forum.dev, did Google violate the "clear and conspicuous" disclosure requirement? I'm not sure, but if I were a registrar I would display prices in the currency charged by the registry to avoid misunderstandings like this.)

I wouldn't assume that the .com price caps will remain forever. .org used to have price caps too, before switching to the standard registry agreement in 2019. But even if .com switched to the standard agreement, we probably wouldn't see "premium" .com domains: at this point, every .com domain which would be considered "premium" has already been registered. And Verisign wouldn't be allowed to increase the renewal price of already-registered domains due to the need for disclosure at the time of initial registration.

There ain't no rules for ccTLDs (.io, .tv, .au, etc.)

It's important to note that registries for country-code TLDs (which is every 2-letter TLD) do not have enforceable registry agreements with ICANN. Instead, they are governed by their respective countries (or similar political entities), which can do as they please. They can sucker you in with a low price and then hold your domain hostage when it gets popular. If you register your domain in a banana republic because you think the TLD looks cool, and el presidente wants your domain to host his cat pictures, tough luck.

This is only scratching the surface of what's wrong with ccTLDs, but that's a topic for another blog post. Suffice to say, I do not recommend using ccTLDs unless all of the following are true:

- You live in the country which owns the ccTLD and don't plan on moving.

- You don't expect the region where you live to secede from the political entity which owns the ccTLD. (Just ask the British citizens who had .eu domains.)

- You trust the operator of the ccTLD to be fair and competent.

Further Complications

To make matters more confusing, sometimes when you buy a domain from a registrar, you're not getting it from the registry, but from an existing owner who is squatting the domain. In this case, you pay a large upfront cost to get the squatter to transfer the domain to you, after which the domain renews at the lower, registry-set price. It used to be fairly obvious when this was happening, as you'd transact directly with the squatter, but now several registrars will broker the transaction for you. The Google registrar calls these "aftermarket" domains, which I think is a good name, but other registrars call them "premium" domains, which is confusing because such domains may or may not be considered "premium" by the registry and subject to higher renewal prices.

Yet another confounding factor is that registrars sometimes steeply discount the initial registration fee, taking a loss in the hope of making it up with renewals and other services.

To sum up, there are multiple scenarios you may face when buying a domain:

| Scenario | Initial Fee | Renewal Fee |
|---|---|---|
| Non-premium domain, no discount | $$ | $$ |
| Non-premium domain, first year discount | $ | $$ |
| Premium domain, no discount | $$$ | $$$ |
| Premium domain, first year discount | $$ | $$$ |
| Aftermarket non-premium domain | $$$$ | $$ |
| Aftermarket premium domain | $$$$ | $$$ |
| ccTLD domain | Varies | Sky's the limit! |

I was curious how different registrars distinguish between these cases, so I tried searching for the following domains at Gandi, GoDaddy, Google, Namecheap, and name.com:

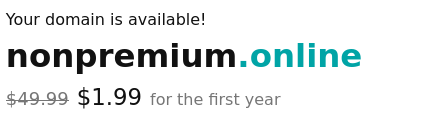

- safkjfkjfkjfdkjdfkj.com and nonpremium.online - decidedly non-premium domains

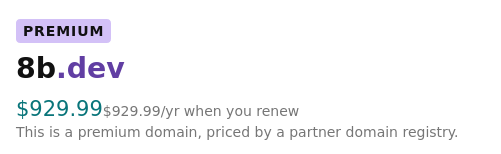

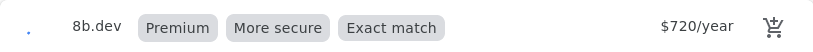

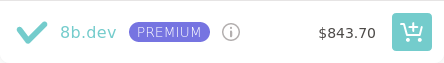

- 8b.dev - premium domain

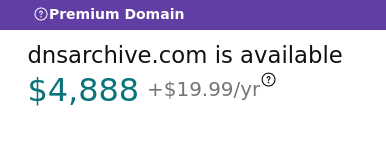

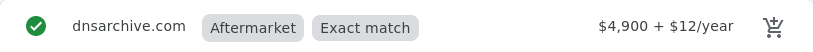

- dnsarchive.com - aftermarket domain

Gandi

Non-premium domain, no discount:

Non-premium domain, first year discount:

Premium domain:

Gandi does not seem to sell aftermarket domains.

GoDaddy

Non-premium domain, first year discount:

Premium domain:

Aftermarket domain:

Google

Non-premium domain, no discount:

Premium domain:

Aftermarket domain:

Namecheap

Non-premium domain, first year discount:

Premium domain:

Aftermarket domain:

name.com

Non-premium domain, first year discount:

Premium domain:

Aftermarket domain:

Thoughts

I think Gandi and Google do the best job conveying the first year and renewal prices using clear and consistent UI. Unfortunately, since the publication of this post, Gandi has been acquired by another company, and Google is discontinuing their registrar service. Namecheap is the worst, only showing a clear renewal price when it's less than the initial price, but obscuring it when it's the same or higher (note the use of the term "Retail" instead of "Renews at", and the lack of a "/yr" suffix for the 8b.dev price). name.com also obscures the renewal price for nonpremium.online (I very much doubt it's $1.99). GoDaddy also fails to show a clear renewal price for the non-premium domains, but at least says the quoted price is "for the first year."

My advice is to pay very close attention to the renewal price when buying a domain, because it may be the same, lower, or higher than the first year's fee. And be very wary of 2-letter TLDs (ccTLDs).

December 1, 2022

Checking if a Certificate is Revoked: How Hard Can It Be?


SSLMate's Certificate Transparency Search API now returns two new fields that tell you if, why, and when the certificate was revoked:

```json
"revoked":true,
"revocation":{"time":"2021-10-27T21:38:48Z","reason":0,"checked_at":"2022-10-18T14:49:56Z"},
```

(See the complete API response)

This simple-sounding feature was obnoxious to implement, and required dealing with some amazingly creative screwups by certificate authorities, and a clunky system called the Common CA Database that's built on Salesforce. Just how dysfunctional is the WebPKI? Buckle up and find out!

Background on Certificate Revocation

There are two ways for a CA to publish that a certificate is revoked: the online certificate status protocol (OCSP), and certificate revocation lists (CRLs).

With OCSP, you send an HTTP request to the CA's OCSP server asking, "hey is the certificate with this serial number revoked?" and the CA is supposed to respond "yeah" or "nah", but often responds with "I dunno" or doesn't respond at all. CAs are required to support OCSP, and it's easy to find a CA's OCSP server (the URL is included in the certificate itself), but I didn't want to use it for the CT Search API: each API response can contain up to 100 certificates, so I'd have to make up to 100 OCSP requests just to build a single response. Given the slowness and unreliability of OCSP, that was a no-go.

With CRLs, the CA publishes one or more lists of all revoked serial numbers.

This would be much easier to deal with: I could write a cron job to download every CRL, insert all the entries into my trusty PostgreSQL database, and then building a CT Search API response would be as simple as JOINing with the crl_entry table!

Historically, CRLs weren't an option because not all CAs published CRLs, but on October 1, 2022, both Mozilla and Apple began requiring all CAs in their root programs to publish CRLs. Even better, they required CAs to disclose the URLs of their CRLs in the Common CA Database (CCADB), which is available to the public in the form of a chonky CSV file. Specifically, two new columns were added to the CSV: "Full CRL Issued By This CA", which is populated with a URL if the CA publishes a single CRL, and "JSON Array of Partitioned CRLs", which is populated with a JSON array of URLs if the CA splits its list of revoked certificates across multiple CRLs.

So I got to work writing a cron job in Go that would 1) download and parse the CCADB CSV file to determine the URL of every CRL, 2) download, parse, and verify every CRL, and 3) insert the CRL entries into PostgreSQL.

How hard could this be?

This wasn't my first rodeo so I knew it would be hard. And I was right! The only question was what flavor of dysfunction I'd be encountering.

CCADB Sucks

The CCADB is a database run by Mozilla that contains information about publicly-trusted certificate authorities. The four major browser makers (Mozilla, Apple, Chrome, and Microsoft) use the CCADB to keep track of the CAs which are trusted by their products.

CCADB could be a fairly simple CRUD app, but instead it's built on Salesforce, which means it's actual crud. CAs use a clunky enterprise-grade UI to update their information, such as to disclose their CRLs. Good news: there's an API. Bad news: here's how to get API credentials:

Salesforce will redirect to the callback url (specified in 'redirect_uri'). Quickly freeze the loading of the page and look in the browser address bar to extract the 'authorization code', save the code for the next steps.

To make matters worse, CCADB's data model is wrong (it's oriented around certificates rather than subject+key), which means the same information about a CA needs to be entered in multiple places. There is very little validation of anything a CA inputs. Consequently, the information in the CCADB is often missing, inconsistent, or just flat out wrong.

In the "Full CRL Issued By This CA" column, I saw:

- URLs without a protocol

- Multiple URLs

- The strings "expired" and "revoked"

Meanwhile, the data for "JSON Array of Partitioned CRLs" could be divided into three categories:

- The empty array ([]).

- A comma-separated list of URLs, with no square brackets or quotes.

- A comma-separated list of URLs, with square brackets but without quotes.

In other words, the only well-formed JSON in sight was the empty array.

Initially, I assumed that CAs didn't know how to write non-trivial JSON, because that seems like a skill they would struggle with. Turned out that Salesforce was stripping quotes from the CSV export. OK, CAs, it's not your fault this time. (Well, except for the one who left out square brackets.) But don't get too smug, CAs - we haven't tried to download your CRLs yet.

(The CSV was eventually fixed, but to unblock my progress I had to parse this column with a mash of strings.Trim and strings.Split. Even Mozilla had to resort to such hacks to parse their own CSV file.)

CAs Suck

Once I got the CCADB CSV parsed successfully, it was time to download some CRLs! Surely, this would be easy - even though CRLs weren't mandatory before October 1, the vast majority of CAs had been publishing CRLs for years, and plenty of clients were already consuming them. Surely, any problems would have been discovered and fixed by now, right?

Ah hah hah hah hah.

I immediately ran into some fairly basic issues, like Amazon's CRLs returning a 404 error, D-TRUST encoding CRLs as PEM instead of DER, or Sectigo disclosing a CRL with a non-existent hostname because they forgot to publish a DNS record, as well as some more... interesting issues:

GoDaddy

Since root certificate keys are kept offline, CRLs for root certificates have to be generated manually during a signing ceremony. Signing ceremonies are extremely serious affairs that involve donning ceremonial robes, entering a locked cage, pulling a laptop out of a safe, and manually running openssl commands based on a script - and not the shell variety, but the reams of dead tree variety. Using the openssl command is hell in the best of circumstances - now imagine doing it from inside a cage. The smarter CAs write dedicated ceremony tooling instead of using openssl. The rest bungle ceremonies on the regular, as GoDaddy did here when they generated CRLs that had an obsolete version number and lacked a required extension, and consequently couldn't be parsed by Go. To GoDaddy's credit, they are now planning to switch to dedicated ceremony tooling. Sometimes things do get better!

GlobalSign

Instead of setting the CRL signature algorithm based on the algorithm of the issuing CA's key, GlobalSign was setting it based on the algorithm of the issuing CA's signature. So when an elliptic curve intermediate CA was signed by an RSA root CA, the intermediate CA would produce CRLs that claimed to have RSA signatures even though they were really elliptic curve signatures.

After receiving my report, GlobalSign fixed their logic and added a test case.

Google Trust Services

Here is the list of CRL revocation reason codes defined by RFC 5280:

```
CRLReason ::= ENUMERATED {
     unspecified             (0),
     keyCompromise           (1),
     cACompromise            (2),
     affiliationChanged      (3),
     superseded              (4),
     cessationOfOperation    (5),
     certificateHold         (6),
          -- value 7 is not used
     removeFromCRL           (8),
     privilegeWithdrawn      (9),
     aACompromise           (10) }
```

And here is the protobuf enum that Google uses internally for revocation reasons:

```protobuf
enum RevocationReason {
  UNKNOWN = 0;
  UNSPECIFIED = 1;
  KEYCOMPROMISE = 2;
  CACOMPROMISE = 3;
  AFFILIATIONCHANGED = 4;
  SUPERSEDED = 5;
  CESSATIONOFOPERATION = 6;
  CERTIFICATEHOLD = 7;
  PRIVILEGEWITHDRAWN = 8;
  AACOMPROMISE = 9;
}
```

As you can see, the reason code for unspecified is 0, and the protobuf enum value for unspecified is 1. The reason code for keyCompromise is 1 and the protobuf enum value for keyCompromise is 2. Therefore, by induction, all reason codes are exactly one less than the protobuf enum value. QED.

That was the logic of Google's code, which generated CRL reason codes by subtracting one from the protobuf enum value, instead of using a lookup table or switch statement. Of course, when it came time to revoke a certificate for the reason "privilegeWithdrawn", this resulted in a reason code of 7, which is not a valid reason code. Whoops.

At least this bug only materialized a few months ago, unlike most of the other CAs mentioned here, who had been publishing busted CRLs for years.

After receiving my report, Google fixed the CRL and added a test case, and will contribute to CRL linting efforts.
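The lookup-table approach mentioned above might look like this in Go. The RevocationReason constants are a hypothetical hand-written mirror of the protobuf enum (generated protobuf code would use different names), and the CRL values come from the RFC 5280 list.

```go
package main

import "fmt"

// RevocationReason mirrors the protobuf enum; iota assigns 0..9 in order.
type RevocationReason int32

const (
	ReasonUnknown RevocationReason = iota // 0
	ReasonUnspecified
	ReasonKeyCompromise
	ReasonCACompromise
	ReasonAffiliationChanged
	ReasonSuperseded
	ReasonCessationOfOperation
	ReasonCertificateHold
	ReasonPrivilegeWithdrawn
	ReasonAACompromise
)

// crlReasonCodes maps each enum value to its RFC 5280 CRLReason code
// explicitly, so the unused value 7 and removeFromCRL (8), which has no
// protobuf counterpart, can't throw off the numbering.
var crlReasonCodes = map[RevocationReason]int{
	ReasonUnspecified:          0,
	ReasonKeyCompromise:        1,
	ReasonCACompromise:         2,
	ReasonAffiliationChanged:   3,
	ReasonSuperseded:           4,
	ReasonCessationOfOperation: 5,
	ReasonCertificateHold:      6,
	ReasonPrivilegeWithdrawn:   9,
	ReasonAACompromise:         10,
}

// crlReasonCode returns the CRL reason code, or an error for values (such as
// ReasonUnknown) that have no RFC 5280 equivalent.
func crlReasonCode(r RevocationReason) (int, error) {
	code, ok := crlReasonCodes[r]
	if !ok {
		return 0, fmt.Errorf("no CRL reason code for revocation reason %d", r)
	}
	return code, nil
}

func main() {
	code, err := crlReasonCode(ReasonPrivilegeWithdrawn)
	fmt.Println(code, err) // 9 <nil>
}
```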

Conclusion

There are still some problems that I haven't investigated yet, but at this point, SSLMate knows the revocation status of the vast majority of publicly-trusted SSL certificates, and you can access it with just a simple HTTP query.

If you need to programmatically enumerate all the SSL certificates for a domain, such as to inventory your company's SSL certificates, then check out SSLMate's Certificate Transparency Search API. I don't know of any other service that pulls together information from over 40 Certificate Transparency logs and 3,500+ CRLs into one JSON API that's queryable by domain name. Best of all, I stand between you and all the WebPKI's dysfunction, so you can work on stuff you actually like, instead of wrangling CSVs and debugging CRL parsing errors.

May 18, 2022

Parsing a TLS Client Hello with Go's cryptobyte Package

In my original post about SNI proxying, I showed how you can parse a TLS Client Hello message (the first message that the client sends to the server in a TLS connection) in Go using an amazing hack that involves calling tls.Server with a read-only net.Conn wrapper and a GetConfigForClient callback that saves the tls.ClientHelloInfo argument. I'm using this hack in snid, and if you accessed this blog post over IPv4, it was used to route your connection.

However, it's pretty gross, and only gives me access to the parts of the Client Hello message that are exposed in the tls.ClientHelloInfo struct. So I've decided to parse the Client Hello properly, using the golang.org/x/crypto/cryptobyte package, which is a great library that makes it easy to parse length-prefixed binary messages, such as those found in TLS.

cryptobyte was added to Go's quasi-standard x/crypto library in 2017. Since then, more and more parts of Go's TLS and X.509 libraries have been updated to use cryptobyte for parsing, often leading to significant performance gains.

In this post, I will show you how to use cryptobyte to parse a TLS Client Hello message, and introduce https://tlshello.agwa.name, an HTTP server that returns a JSON representation of the Client Hello message sent by your client.

Using cryptobyte

The cryptobyte parser is centered around the cryptobyte.String type, which is just a slice of bytes that points to the message that you are parsing:

```go
type String []byte
```

cryptobyte.String contains methods that read a part of the message and advance the slice to point to the next part.

For example, let's say you have a message consisting of a variable-length string prefixed by a 16-bit big-endian length, followed by a 32-bit big-endian integer:

First, you create a cryptobyte.String variable, message, which points to the above bytes.

Then, to read the name, you use ReadUint16LengthPrefixed:

```go
var name cryptobyte.String
message.ReadUint16LengthPrefixed(&name)
```

ReadUint16LengthPrefixed reads two things. First, it reads the 16-bit length. Second, it reads the number of bytes specified by the length. So, after the above function call, name points to the 6 byte string "Andrew", and message is mutated to point to the remaining 4 bytes containing the ID.

To read the ID, you use ReadUint32:

```go
var id uint32
message.ReadUint32(&id)
```

After this call, id contains 5961228 (0x5AF60C) and message is empty.

Note that cryptobyte.String's methods return a bool indicating if the read was successful. In real code, you'd want to check the return value and return an error if necessary. It's also a good idea to call Empty to make sure that the string is really empty at the end, so you can detect and reject trailing garbage.

cryptobyte.String's methods are generally zero-copy. In the above example, name will point to the same memory region which message originally pointed to. This makes cryptobyte very efficient.

Parsing the TLS Client Hello

Let's write a function that takes the bytes of a TLS Client Hello handshake message as input, and returns a struct with info about the TLS handshake:

```go
func UnmarshalClientHello(handshakeBytes []byte) *ClientHelloInfo
```

We start by constructing a cryptobyte.String from handshakeBytes:

```go
handshakeMessage := cryptobyte.String(handshakeBytes)
```

For guidance, we turn to Section 4 of RFC 8446, which describes TLS 1.3's handshake protocol.

Here's the definition of a handshake message:

```
struct {
    HandshakeType msg_type;    /* handshake type */
    uint24 length;             /* remaining bytes in message */
    select (Handshake.msg_type) {
        case client_hello:          ClientHello;
        case server_hello:          ServerHello;
        case end_of_early_data:     EndOfEarlyData;
        case encrypted_extensions:  EncryptedExtensions;
        case certificate_request:   CertificateRequest;
        case certificate:           Certificate;
        case certificate_verify:    CertificateVerify;
        case finished:              Finished;
        case new_session_ticket:    NewSessionTicket;
        case key_update:            KeyUpdate;
    };
} Handshake;
```

The first field in the message is a HandshakeType, which is an enum defined as:

```
enum {
    client_hello(1),
    server_hello(2),
    new_session_ticket(4),
    end_of_early_data(5),
    encrypted_extensions(8),
    certificate(11),
    certificate_request(13),
    certificate_verify(15),
    finished(20),
    key_update(24),
    message_hash(254),
    (255)
} HandshakeType;
```

According to the above definition, a Client Hello message has a value of 1. The last entry of the enum specifies the largest possible value of the enum. In TLS, enums are transmitted as a big-endian integer using the smallest number of bytes needed to represent the largest possible enum value. That's 255, so HandshakeType is transmitted as an 8-bit integer. Let's read this integer and verify that it's 1:

```go
var messageType uint8
if !handshakeMessage.ReadUint8(&messageType) || messageType != 1 {
	return nil
}
```

The second field, length, is a 24-bit integer specifying the number of bytes remaining in the message. The third and last field depends on the type of handshake message. Since it's a Client Hello message, it has type ClientHello. Let's read these two fields using ReadUint24LengthPrefixed and then make sure there are no bytes remaining in handshakeMessage:

```go
var clientHello cryptobyte.String
if !handshakeMessage.ReadUint24LengthPrefixed(&clientHello) || !handshakeMessage.Empty() {
	return nil
}
```

clientHello now points to the bytes of the ClientHello structure, which is defined in Section 4.1.2 as follows:

```
struct {
    ProtocolVersion legacy_version;
    Random random;
    opaque legacy_session_id<0..32>;
    CipherSuite cipher_suites<2..2^16-2>;
    opaque legacy_compression_methods<1..2^8-1>;
    Extension extensions<8..2^16-1>;
} ClientHello;
```

The first field is legacy_version, whose type is defined as a 16-bit integer:

```
uint16 ProtocolVersion;
```

To read it, we do:

```go
var legacyVersion uint16
if !clientHello.ReadUint16(&legacyVersion) {
	return nil
}
```

Next, random, whose type is defined as:

```
opaque Random[32];
```

That means it's an opaque sequence of exactly 32 bytes. To read it, we do:

```go
var random []byte
if !clientHello.ReadBytes(&random, 32) {
	return nil
}
```

Next, legacy_session_id. Like random, it is an opaque sequence of bytes, but this time the RFC specifies the length as a range, <0..32>. This syntax means it's a variable-length sequence that's between 0 and 32 bytes long, inclusive. In TLS, the length is transmitted just before the byte sequence as a big-endian integer using the smallest number of bytes necessary to represent the largest possible length. In this case, that's one byte, so we can read legacy_session_id using ReadUint8LengthPrefixed:

```go
var legacySessionID []byte
if !clientHello.ReadUint8LengthPrefixed((*cryptobyte.String)(&legacySessionID)) {
	return nil
}
```

Now we're on to cipher_suites, which is where things start to get interesting. As with legacy_session_id, it's a variable-length sequence, but rather than being a sequence of bytes, it's a sequence of CipherSuites, which is defined as a pair of 8-bit integers:

```
uint8 CipherSuite[2];
```

In TLS, the length of the sequence is specified in bytes, rather than number of items. For cipher_suites, the largest possible length is just shy of 2^16, which means a 16-bit integer is used, so we'll use ReadUint16LengthPrefixed to read the cipher_suites field:

```go
var ciphersuitesBytes cryptobyte.String
if !clientHello.ReadUint16LengthPrefixed(&ciphersuitesBytes) {
	return nil
}
```

Now we can iterate to read each item:

```go
for !ciphersuitesBytes.Empty() {
	var ciphersuite uint16
	if !ciphersuitesBytes.ReadUint16(&ciphersuite) {
		return nil
	}
	// do something with ciphersuite, like append to a slice
}
```

Next, legacy_compression_methods, which is similar to legacy_session_id:

```go
var legacyCompressionMethods []uint8
if !clientHello.ReadUint8LengthPrefixed((*cryptobyte.String)(&legacyCompressionMethods)) {
	return nil
}
```

Finally, we reach the extensions field, which is another variable-length sequence, this time containing the Extension struct, defined as:

```
struct {
    ExtensionType extension_type;
    opaque extension_data<0..2^16-1>;
} Extension;
```

ExtensionType is an enum with maximum value 65535 (i.e. a 16-bit integer).

As with cipher_suites, we read all the bytes in the field into a cryptobyte.String:

```go
var extensionsBytes cryptobyte.String
if !clientHello.ReadUint16LengthPrefixed(&extensionsBytes) {
	return nil
}
```

Since this is the last field, we want to make sure clientHello is now empty:

```go
if !clientHello.Empty() {
	return nil
}
```

Now we can iterate to read each Extension item:

```go
for !extensionsBytes.Empty() {
	var extType uint16
	if !extensionsBytes.ReadUint16(&extType) {
		return nil
	}
	var extData cryptobyte.String
	if !extensionsBytes.ReadUint16LengthPrefixed(&extData) {
		return nil
	}
	// Parse extData according to extType
}
```

And that's it! You can see working code, including parsing of several common extensions, in my tlshacks package.

tlshello.agwa.name

To test this out, I wrote an HTTP server that returns a JSON representation of the Client Hello. This is rather handy for checking what ciphers and extensions a client supports. You can check out what your client's Client Hello looks like at https://tlshello.agwa.name.

Making the Client Hello message available to an HTTP handler required some gymnastics, including writing a net.Conn wrapper struct that peeks at the first TLS handshake message and saves it in the struct, and then a ConnContext callback that grabs the saved message out of the wrapper struct and makes it available in the request's context. You can read the code if you're curious.

I'm happy to say that deploying this HTTP server was super easy thanks to snid. This service cannot run behind an HTTP reverse proxy - it has to terminate the TLS connection itself. Without snid, I would have needed to use a dedicated IPv4 address.

April 15, 2022

How I'm Using SNI Proxying and IPv6 to Share Port 443 Between Webapps

My preferred method for deploying webapps is to have the webapp listen directly on port 443, without any sort of standalone web server or HTTP reverse proxy in front. I have had it with standalone web servers: they're all over-complicated and I always end up with an awkward bifurcation of logic between my app's code and the web server's config. Meanwhile, my preferred language, Go, has a high-quality, memory-safe HTTPS server in the standard library that is well suited for direct exposure on the Internet.

However, only one process at a time can listen on a given IP address and port number. In a world of ubiquitous IPv6, this wouldn't be a problem - each of my servers has literally trillions of IPv6 addresses, so I could easily dedicate one IPv6 address per webapp. Unfortunately, IPv6 is not ubiquitous, and due to the shortage of IPv4 addresses, it would be too expensive to give each app its own IPv4 address.

The conventional solution for this problem is HTTP reverse proxying, but I want to do better. I want to be able to act like IPv6 really is ubiquitous, but continue to support IPv4-only clients with a minimum amount of complexity and mental overhead. To accomplish this, I've turned to SNI-based proxying.

I've written about SNI proxying before, but in a nutshell: a proxy server can use the first message in a TLS connection (the Client Hello message, which is unencrypted and contains the server name (SNI) that the client wants to connect to) to decide where to route the connection. Here's how I'm using it:

- My webapps listen on port 443 of a dedicated IPv6 address. They do not listen on IPv4.

- Each of my servers runs snid, a Go daemon which listens on port 443 of the server's single IPv4 address.

- When snid receives a connection, it peeks at the first TLS message to get the desired server name. It does a DNS lookup for the server name's IPv6 address, and proxies the TCP connection there. To prevent snid from being used as an open proxy, snid only forwards the connection if the IPv6 address is within my server's IPv6 range.

- The AAAA record for a webapp is the dedicated IPv6 address, and the A record is the shared IPv4 address. Thus, IPv6 clients connect directly to the webapp, while IPv4 clients are proxied via snid.

Preserving the Client's IP Address

One of the headaches caused by proxies is that the backend doesn't see the client's IP address - connections appear to be from the proxy server instead. With HTTP proxying, this problem is typically solved by stuffing the client's IP address in a header field, which is a minefield of security problems that allow client IP addresses to be spoofed if you're not careful. With TCP proxying, a common solution is to use the PROXY protocol, which puts the client's IP address at the beginning of the proxied connection. However, this requires backends to understand the PROXY protocol.

snid can do better. Since IPv6 addresses are 128 bits long, but IPv4 addresses are only 32 bits, it's possible to embed IPv4 addresses in IPv6 addresses. snid embeds the client's IP address in the lower 32 bits of the source address which it uses to connect to the backend. It's trivial for the backend to translate the source address back to the IPv4 address, but this is purely a user interface concern. If a backend doesn't do the translation, it's possible for the human operator to do the translation manually when viewing log entries, configuring access control, etc.

For the IPv6 prefix, I use 64:ff9b:1::/48, which is a non-publicly-routed prefix reserved for IPv4/IPv6 translation mechanisms. For example, the IPv4 address 192.0.2.10 translates to:

64:ff9b:1::c000:20a

Conveniently, it can also be written using embedded IPv4 notation:

64:ff9b:1::192.0.2.10

O(1) Config

snid's configuration is just a few command line arguments. Here's the command line for the instance of snid that's running on the server that serves www.agwa.name and src.agwa.name:

snid -listen tcp:18.220.42.202:443 -mode nat46 -nat46-prefix 64:ff9b:1:: -backend-cidr 2600:1f16:719:be00:5ba7::/80

-listen tells snid to listen on port 443 of 18.220.42.202, which is the IPv4 address for www.agwa.name and src.agwa.name.

-mode nat46 tells snid to forward connections over IPv6, with the source IPv4 address embedded using the prefix specified by -nat46-prefix.

-backend-cidr tells snid to only forward connections to addresses within the 2600:1f16:719:be00:5ba7::/80 subnet, which includes the IPv6 addresses for www.agwa.name and src.agwa.name (2600:1f16:719:be00:5ba7::2 and 2600:1f16:719:be00:5ba7::1, respectively).

The best thing about snid's configuration is that I only have to touch it once. I don't have to change it when deploying new webapps. Deploying a new webapp only requires assigning it an IPv6 address and publishing DNS records for it, just like it would be in my dream world of ubiquitous IPv6. I call this O(1) configuration since it doesn't get longer or more complex with the number of webapps I run.

Guaranteed Secure

HTTP reverse proxying is a minefield of security concerns. In addition to the IP address spoofing problems discussed above, you have to contend with request smuggling and HTTP desync vulnerabilities. This is a class of vulnerability that will never truly be solved: you can patch vulnerabilities as they're discovered, but thanks to the inherent ambiguity in parsing HTTP, you can never be sure there won't be more.

I don't have to worry about any of this with snid. Since snid doesn't decrypt the TLS connection (and lacks the necessary keys to do so), proxying with snid is guaranteed to be secure as long as TLS is secure. It can harm security no more than any untrusted router on the Internet can. This helps put snid out of my mind so I can forget that it even exists.

Compatible with ACME ALPN

Since ACME's TLS-ALPN challenge uses SNI to convey the hostname being validated, snid will forward TLS-ALPN requests from the certificate authority to the appropriate backend. Automatic certificate acquisition, such as with Go's autocert package, Just Works.

What About Encrypted Client Hello?

Since the SNI hostname is in plaintext, a network eavesdropper can determine what hostname a client is connecting to. This is bad for privacy and censorship resistance, so there is an effort underway to encrypt not just the SNI hostname, but the entire Client Hello message. How does this affect snid?

First, it's important to note that the destination IP address in the IP header is always going to be unencrypted, so by putting my webapps on different IPv6 addresses, I'm giving eavesdroppers the ability to find out which webapp clients are connecting to, regardless of SNI. However, a single webapp might handle multiple hostnames, and I'd like to hide the specific hostname from eavesdroppers, so Encrypted Client Hello still has some value. Fortunately, Encrypted Client Hello works with snid.

Encrypted Client Hello doesn't actually encrypt the initial Client Hello message. It's still sent in the clear, but with a decoy SNI hostname. The actual Client Hello message, with the true SNI hostname, is encrypted and placed in an extension of the unencrypted Client Hello. To make Encrypted Client Hello work with snid, I just need to ensure that the decoy SNI hostname resolves to the IPv6 address of the backend server. snid will see this hostname and route the connection to the correct backend server, as usual. The backend will decrypt the inner Client Hello to determine which specific hostname the client wants.

For additional detail about this approach, see my comment on Hacker News.

What About Port 80?

Obviously, I can't proxy unencrypted HTTP traffic using SNI-based proxying. But at this point, port 80 exists solely to redirect clients to HTTPS. To handle this, I plan to run a tiny, zero-config daemon on port 80 of all IPv4 and IPv6 addresses that will redirect the client to the same URL but with http:// replaced with https://.

(For now, I'm using Apache for this.)

Installing snid

If you have Go, you can install snid by running:

go install src.agwa.name/snid@latest

You can also download a statically-linked binary.

See the README for the command line usage.

Rejected Approach: UNIX Domain Sockets

Before settling on the approach described above, I had snid listen on port 443 of all interfaces (both IPv4 and IPv6) and forward connections to a UNIX domain socket whose path contained the SNI hostname. For example, connections to example.com would be forwarded to /var/tls/example.com. The client's IP address was preserved using the PROXY protocol.

This had some nice properties. I could use filesystem permissions to control who was allowed to create sockets, either by setting permissions on /var/tls, or by symlinking specific hostnames under /var/tls to other locations on the filesystem which non-root users could write to. It felt really elegant that applications could listen on an SNI hostname rather than on an IP address and port.
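As an illustration of that design, here is a hypothetical Go sketch that maps an SNI hostname to a socket under /var/tls and dials it. The hostname comes from an untrusted client, so the sketch rejects names that could escape the directory; that validation, the lowercasing, and the names socketPath and dialBackend are assumptions of this sketch, not a description of how snid does it.

```go
package main

import (
	"errors"
	"net"
	"path/filepath"
	"strings"
)

// socketDir is the directory from the example above.
const socketDir = "/var/tls"

// socketPath maps an SNI hostname to a socket path under socketDir.
// Names containing path separators or NUL, or starting with ".", are
// rejected so a client cannot reach files outside socketDir.
func socketPath(serverName string) (string, error) {
	name := strings.ToLower(strings.TrimSuffix(serverName, "."))
	if name == "" || strings.ContainsAny(name, "/\\\x00") || strings.HasPrefix(name, ".") {
		return "", errors.New("unacceptable server name")
	}
	return filepath.Join(socketDir, name), nil
}

// dialBackend connects to the UNIX domain socket for serverName.
func dialBackend(serverName string) (net.Conn, error) {
	p, err := socketPath(serverName)
	if err != nil {
		return nil, err
	}
	return net.Dial("unix", p)
}
```

Symlinks under /var/tls are followed by the kernel when connecting, which is what makes the delegation-by-symlink trick described above work.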

However, few server applications support the PROXY protocol or listening on UNIX domain sockets. I could make sure my own apps had support, but I really wanted to be able to use off-the-shelf apps with snid. I did write an amazing LD_PRELOAD library that intercepts the bind system call and transparently replaces binding to a TCP port with binding to a UNIX domain socket. It even intercepts getpeername and makes it return the IP address received via the PROXY protocol. Although this worked with every application I tried it with, it felt like a hack.

Additionally, UNIX domain sockets have some annoying semantics: if the socket file already exists (perhaps because the application crashed without removing the socket file), you can't bind to it - even if no other program is actually bound to it. But if you remove the socket file, any program bound to it continues running, completely unaware that it will never again accept a client. The semantics of TCP port binding feel more robust in comparison.

For these reasons I switched to the IPv6 approach described above, allowing me to use standard, unmodified TCP-listening server apps without any hacks that might compromise robustness. However, support for UNIX domain sockets lives on in snid with the -mode unix flag.

January 19, 2022

Comcast Shot Themselves in the Foot with MTA-STS

I recently heard from someone, let's call them Alex, who was unable to email comcast.net addresses. Alex's emails were being bounced back with an MTA-STS policy error:

MX host mx2h1.comcast.net does not match any MX pattern in MTA-STS policy

MTA-STS failure for Comcast.net: Validation error (E_HOST_MISMATCH)

MX host mx1a1.comcast.net does not match any MX pattern in MTA-STS policy

MTA-STS failure for Comcast.net: Validation error (E_HOST_MISMATCH)

MTA-STS is a relatively new standard that allows domain owners such as Comcast to opt in to authenticated encryption for their mail servers. (By default, SMTP traffic between mail servers uses opportunistic encryption, which can be defeated by active attackers to intercept email.) MTA-STS requires the domain owner to duplicate their MX record (the DNS record that lists a domain's mail servers) in a text file served over HTTPS. Sending mail servers, like Alex's, refuse to contact mail servers that aren't listed in the MTA-STS text file. Since HTTPS uses authenticated encryption, the text file can't be altered by active attackers. (In contrast, the MX record is vulnerable to manipulation unless DNSSEC is used, but people don't like DNSSEC which is why MTA-STS was invented.)

The above error messages mean that although mx2h1.comcast.net and mx1a1.comcast.net are listed in comcast.net's MX record, they are not listed in comcast.net's MTA-STS policy file. Consequently, Alex's mail server thinks that the MX record was altered by attackers, and is refusing to deliver mail to what it assumes are rogue mail servers.
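The check behind E_HOST_MISMATCH can be sketched in Go: parse the policy's "key: value" lines and test each MX host against the mx patterns, where a leading "*." matches exactly one label. This is a simplified, hypothetical parser (stsPolicy, parsePolicy, and allowsMX are illustrative names); it ignores unknown keys and does not validate every rule in the MTA-STS specification.

```go
package main

import (
	"errors"
	"strconv"
	"strings"
)

// stsPolicy holds the fields of an MTA-STS policy file.
type stsPolicy struct {
	Version string
	Mode    string
	MX      []string
	MaxAge  int64
}

// parsePolicy reads "key: value" lines; the mx key may appear many times.
// Unknown keys and blank lines are ignored.
func parsePolicy(text string) (stsPolicy, error) {
	var p stsPolicy
	for _, line := range strings.Split(text, "\n") {
		key, value, ok := strings.Cut(strings.TrimSpace(line), ":")
		if !ok {
			continue
		}
		value = strings.TrimSpace(value)
		switch strings.TrimSpace(key) {
		case "version":
			p.Version = value
		case "mode":
			p.Mode = value
		case "mx":
			p.MX = append(p.MX, strings.ToLower(value))
		case "max_age":
			n, err := strconv.ParseInt(value, 10, 64)
			if err != nil {
				return p, err
			}
			p.MaxAge = n
		}
	}
	if p.Version != "STSv1" {
		return p, errors.New("missing or unsupported version")
	}
	return p, nil
}

// allowsMX reports whether an MX host matches one of the policy's patterns.
// A pattern like "*.example.net" matches exactly one extra leading label.
func (p stsPolicy) allowsMX(host string) bool {
	host = strings.ToLower(strings.TrimSuffix(host, "."))
	for _, pat := range p.MX {
		if pat == host {
			return true
		}
		if strings.HasPrefix(pat, "*.") {
			suffix := pat[1:] // e.g. ".example.net"
			label := strings.TrimSuffix(host, suffix)
			if strings.HasSuffix(host, suffix) && label != "" && !strings.Contains(label, ".") {
				return true
			}
		}
	}
	return false
}
```

Run against Comcast's current policy, mx2h1.comcast.net and mx1a1.comcast.net both pass, so a mismatch can only come from checking against an older, cached policy.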

However, mx2h1.comcast.net and mx1a1.comcast.net are not rogue mail servers. They are in fact listed in Comcast's current MTA-STS policy:

version: STSv1
mode: enforce
mx: mx2c1.comcast.net
mx: mx2h1.comcast.net
mx: mx1a1.comcast.net
mx: mx1h1.comcast.net
mx: mx1c1.comcast.net
mx: mx2a1.comcast.net
max_age: 2592000

This means that Alex's mail server is not consulting comcast.net's current MTA-STS policy. Instead, it's consulting a cached policy which does not list mx2h1.comcast.net and mx1a1.comcast.net.

This can happen because mail servers cache MTA-STS policies to avoid having to re-download an unchanged policy file every time an email is sent. To determine whether a domain's policy has changed, mail servers query the domain's _mta-sts TXT record (e.g. _mta-sts.comcast.net), and only re-download the MTA-STS policy file if the ID in the TXT record is different from the ID of the currently-cached policy.
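The caching rule can be modeled in a few lines of Go. This hypothetical sketch (parseSTSRecord and needsDownload are illustrative names) extracts the id from a TXT record such as Comcast's and compares it with the cached id; note that nothing ties the id to the contents of the policy file, which is the root of the pitfall discussed next.

```go
package main

import (
	"errors"
	"strings"
)

// parseSTSRecord extracts the id field from an _mta-sts TXT record such as
// "v=STSv1; id=1638997389;".
func parseSTSRecord(txt string) (string, error) {
	fields := strings.Split(txt, ";")
	if strings.TrimSpace(fields[0]) != "v=STSv1" {
		return "", errors.New("not an STSv1 record")
	}
	for _, f := range fields[1:] {
		key, value, ok := strings.Cut(strings.TrimSpace(f), "=")
		if ok && key == "id" && value != "" {
			return value, nil
		}
	}
	return "", errors.New("missing id")
}

// needsDownload models the caching rule: the policy file is fetched again
// only when the TXT record's id differs from the cached id.
func needsDownload(cachedID, txt string) (bool, error) {
	id, err := parseSTSRecord(txt)
	if err != nil {
		return false, err
	}
	return id != cachedID, nil
}
```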

The obvious implication of the above is that if you ever change your domain's MTA-STS policy, you have to remember to update the TXT record as well.

A more subtle implication is that you have to do the updates in the right order. If you update the TXT record before changing the policy file, and a mail server fetches the policy in the intervening time, it will download the old policy file but cache it under the new ID. It won't ever download the new policy because it thinks it already has it in its cache.

This pitfall could have been avoided had MTA-STS required the ID to also be specified in the policy file instead of just in the TXT record. That would have prevented mail servers from caching policies under the wrong ID.

There's some evidence that this is what happened with comcast.net. The ID in the _mta-sts.comcast.net TXT record appears to be a UNIX timestamp (seconds since the Epoch):

_mta-sts.comcast.net. 7200 IN TXT "v=STSv1; id=1638997389;"

That timestamp translates to 2021-12-08 21:03:09 UTC.

However, the Last-Modified time of https://mta-sts.comcast.net/.well-known/mta-sts.txt is three minutes later:

Last-Modified: Wed, 08 Dec 2021 21:06:05 GMT

If the ID in the TXT record reflects when the TXT record was updated, there was a three-minute gap between the updates. If Alex's mail server fetched comcast.net's MTA-STS policy during this window, it would have cached the old policy under the new ID, causing the errors seen above.

Recommendations for Domain Owners Who Use MTA-STS

You should automate MTA-STS policy publication to ensure that your MTA-STS policy always matches your MX records and that the TXT record is reliably updated, in the correct order, when your policy changes. If your policy file is served by a CDN, you have to be extra careful not to update the TXT record until your new policy file is fully propagated throughout the CDN.

I further recommend that you rotate the ID in the TXT record daily even if no changes have been made to your policy. This will force mail servers to re-download your policy file if it's more than a day old, which provides a backstop in case something goes wrong with the order of updates.

It may be tempting, but you should not reduce your policy's max_age value, as this will diminish your protection against active attackers who block retrieval of your policy. Having a long max_age but a frequently rotating ID keeps your policy up-to-date in mail servers but ensures that in an attack scenario mail servers will fail safe by using a cached policy.

It's quite a bit of work to get this all right. If you want the easy option, SSLMate will automate all aspects of MTA-STS for you: all you need to do is publish two CNAME records delegating the mta-sts and _mta-sts subdomains to SSLMate-operated servers and SSLMate takes care of the rest.

Recommendations for Mail Server Developers

You should assume that domain operators are not going to properly update their TXT records and you should always attempt to re-download policy files that are more than a day old, regardless of what the ID in the TXT record says.

Is this Really Better than DNSSEC/DANE?

Thanks to MTA-STS' duplication of information and requirement for updates to be done in the right order, there is a high chance of human error when MTA-STS is deployed manually. Unfortunately, it's very likely to be deployed manually because there's a dearth of automation software, and on the surface it looks easy to manage by hand. To make matters worse, MTA-STS' caching semantics mean that the inevitable human error leads to hard-to-diagnose problems, such as a subset of mail servers being unable to mail your domain. I suspect that many problems will never be detected - email delivery will just become less reliable than it was before MTA-STS was deployed.

Meanwhile, DNSSEC is increasingly automated, and if you use a modern cloud provider like Route 53, Google Cloud DNS, or Cloudflare, you don't have to worry about remembering to sign zones before they expire, which was traditionally a major source of DNSSEC mistakes.

However, not all mail server operators support DNSSEC/DANE. Although Microsoft recently added DNSSEC/DANE support to Office 365 Exchange, Gmail only supports MTA-STS. Thus, there is still value in deploying MTA-STS despite its flaws. But we should not be happy about this state of affairs.